Art with Heart 2018

Could a custom Style Transfer convolutional neural network help feed people?

Thanks to Cafe 180 in Englewood, Colorado, and a beta photo re-painting charity event, it sure can. Thank you so much to everyone who participated in this crazy experiment, and to Cafe 180 for providing the food.

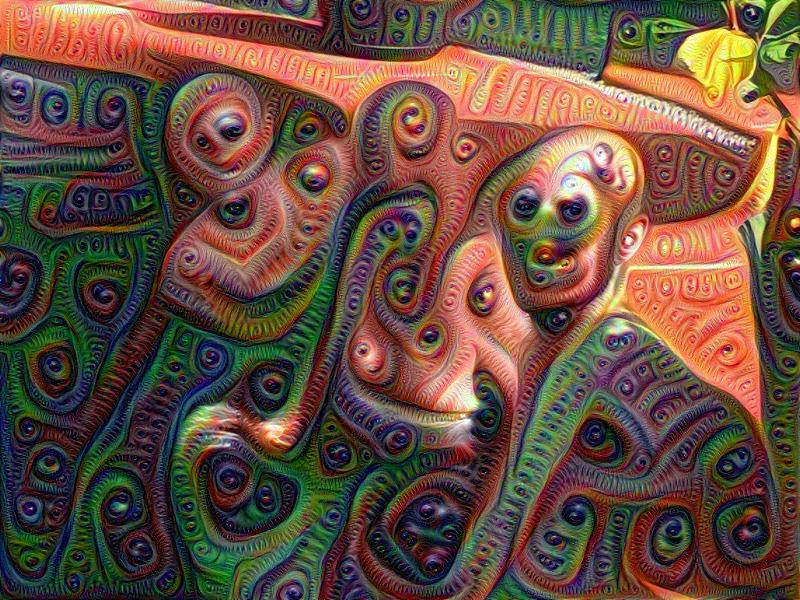

The Style Transfer technique has roots in Google's Deep Dream, which itself came out of research originally intended to understand how the black box of machine learning identifies visual objects. The first few layers of a convolutional neural network more or less make sense: they apply convolution matrix transforms, the same kind of operation that paint programs like Photoshop use for simple effects such as embossing or highlighting the edges of an object. Deeper into the network, however, things get a little weird.
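To make the "convolution matrix" idea concrete, here is a minimal sketch of an emboss filter in Python with NumPy. It is not the network we used at the event, only an illustration of the kind of operation an early layer performs. The kernel values are a common textbook emboss kernel, and the `convolve2d` helper and the tiny test images are names and data made up for this example.

```python
import numpy as np

# A common textbook 3x3 emboss kernel (an assumption for this sketch,
# not a kernel taken from any particular paint program or network).
EMBOSS = np.array([[-2.0, -1.0, 0.0],
                   [-1.0,  1.0, 1.0],
                   [ 0.0,  1.0, 2.0]])


def convolve2d(image, kernel):
    """Convolve a 2-D grayscale image with a small kernel.

    Only positions where the kernel fits entirely inside the image are
    computed, so the output is slightly smaller than the input.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    flipped = kernel[::-1, ::-1]  # true convolution flips the kernel
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            window = image[i:i + kh, j:j + kw]
            out[i, j] = np.sum(window * flipped)
    return out


# A flat gray image: no edges anywhere.
flat = np.full((5, 5), 5.0)

# A dark-to-light vertical edge between columns 2 and 3.
step = np.zeros((5, 6))
step[:, 3:] = 1.0

flat_result = convolve2d(flat, EMBOSS)
edge_result = convolve2d(step, EMBOSS)
print(flat_result)
print(edge_result)
```

Because the kernel's values sum to 1, flat regions pass through unchanged, while the response shifts sharply wherever the window straddles the edge. A convolutional neural network learns the values of many kernels like this one instead of having them written by hand.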

Image Source from Wikipedia By jessica mullen from austin, tx - Deep Dreamscope, CC BY 2.0

Tinman's focus is on empowering people through technology. By taking photos at or before the event, we helped turn even those of us who are artistically challenged into abstract painters. And by partnering with an amazing charity like Cafe 180, we also supported an important and impactful community program that offers nutritious food choices on a pay-what-you-can, when-you-can model.
